People believe what they see. But what if seeing should not always mean believing in this age of fast-evolving digital trickery? Last year, a realistic-looking video supposedly showing Ukrainian president Volodymyr Zelenskyy went viral across that country’s social media. In it, Zelenskyy encourages citizens to “lay down arms” against the Russian invasion.

Had Ukrainians fallen for this, the geopolitical consequences could have been severe. It was soon revealed as a deepfake – an AI-generated synthetic video with someone’s likeness inserted into source content. As Nepal rides the edge of increasing internet penetration and digital vulnerability, this ominously rising technology poses a creeping threat with far-reaching impacts on our upcoming elections, policy discourse, ethnic relations, national security and the very foundations of our belief system.

The art of faking a believable reality

While basic editing to spread misinformation is not new, deepfakes herald manipulation at a new level – using artificial intelligence to automate the faking of visual and vocal realism. The algorithms behind them leverage advances in machine learning, especially generative adversarial networks (GANs), which pit two algorithms against each other to refine outputs.

Essentially, a target person’s images and videos are fed as inputs into the GAN model. The generator creates fabricated footage that tries to imitate the source accurately. Meanwhile, the discriminator compares these outputs with real footage to catch flaws.

This cycle repeats countless times, slowly raising the quality of the digital imitation. Over time, the neural networks assimilate nuances like exact lip movements, micro-expressions, skin blemishes, pulse rate, hoarseness or sneezes.
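The generator-versus-discriminator cycle described above can be sketched in a few lines of Python. This is a deliberately tiny illustration under stated assumptions, not how real deepfake tools are built: the "footage" is a single number clustered around an assumed value of 4.0, the generator and discriminator are one-line formulas rather than neural networks, and the class name `ToyGAN`, its methods, the learning rate and the batch size are all hypothetical choices made for this example.

```python
import math
import random


def sigmoid(t):
    """Numerically safe logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


class ToyGAN:
    """1-D stand-in for a GAN: 'footage' is one number, not video frames."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.a, self.b = 1.0, 0.0  # generator: G(z) = a*z + b
        self.w, self.c = 0.0, 0.0  # discriminator: D(x) = sigmoid(w*x + c)

    def real_batch(self, n):
        # Assumed "real" data: numbers clustered around 4.0
        return [self.rng.gauss(4.0, 0.5) for _ in range(n)]

    def noise(self, n):
        # Random inputs the generator turns into fakes
        return [self.rng.gauss(0.0, 1.0) for _ in range(n)]

    def generate(self, zs):
        return [self.a * z + self.b for z in zs]

    def score(self, x):
        """Discriminator's estimated probability that x is real."""
        return sigmoid(self.w * x + self.c)

    def discriminator_step(self, real, fake, lr=0.05):
        # Gradient descent on -log D(real) - log(1 - D(fake)):
        # reward high scores for real samples, low scores for fakes.
        gw = gc = 0.0
        for x in real:
            d = self.score(x)
            gw += -(1.0 - d) * x
            gc += -(1.0 - d)
        for g in fake:
            d = self.score(g)
            gw += d * g
            gc += d
        n = len(real) + len(fake)
        self.w -= lr * gw / n
        self.c -= lr * gc / n

    def generator_step(self, zs, lr=0.05):
        # Gradient descent on -log D(G(z)): push fakes toward
        # whatever the discriminator currently scores as "real".
        ga = gb = 0.0
        for z in zs:
            d = self.score(self.a * z + self.b)
            dg = -(1.0 - d) * self.w  # d(loss)/d(G(z))
            ga += dg * z
            gb += dg
        self.a -= lr * ga / len(zs)
        self.b -= lr * gb / len(zs)

    def train(self, steps=200, batch=64):
        # The repeated cycle: the discriminator learns to spot fakes,
        # then the generator adjusts to fool it.
        for _ in range(steps):
            fake = self.generate(self.noise(batch))
            self.discriminator_step(self.real_batch(batch), fake)
            self.generator_step(self.noise(batch))
```

Calling `ToyGAN().train()` and then inspecting the generator offset `b` shows the generator being pulled toward the region where the real data lives. Toy setups like this one can oscillate rather than settle, which hints at why real systems need far more data, computing power and tuning.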

With enough data and processing power, synthesised footage eventually matches real video’s richness and idiosyncrasies. While today’s fakes may still seem slightly off, computer vision capacities are likely to bridge this gap soon.

While the current technology permits partial face replacement, future models could conjure fully AI-rendered humans indistinguishable from reality. Labels like ‘shallowfakes’ and ‘cheapfakes’ refer to simpler edits using existing imagery and apps to alter context.

These make it possible to manipulate political speeches and humour dolls, and to put words in someone’s mouth, without needing advanced techniques. Experts liken today’s democratised ability to doctor reality to the path of image editing, from basic Photoshop filters in the 1990s to deep-learning manipulations in the 2020s that puppeteer a person’s motion and expressions. The arms race between falsification and detection capabilities is only warming up.

Where deepfakes stand today

Since the first deepfake algorithm emerged in 2017 to swap celebrity faces into porn videos, the field has advanced rapidly from niche academic interest to mainstream applications. Last year, a Russian startup created a realistic deepfake of Elon Musk in a custom three-minute video that fooled many.

The ease of use around such ‘puppeteering’ of public figures to spread fake news poses huge risks. Meanwhile, apps like Reface, Zao and FaceSwap allow anyone to insert their or their friends’ faces onto existing videos and gifs with little effort.

Leading developers can now synthesise near photo-realistic digital humans, like the one Disney used in its Mandalorian series. Students with access to code and data create compelling deepfakes as class projects.

Specialised firms that help politicians ‘resurrect’ deceased leaders in synthetic speeches at events highlight the commercial side. The Indian government even plans to build a public database to aid the country’s world-leading face recognition industry and enable consent-based deepfakes.

The costs, computing power and AI skills required to generate convincing deepfakes have shrunk dramatically since 2017. Generator algorithms have grown over 200 times faster in 18 months, reflecting swelling interest and investment.

Where only Hollywood-grade software could create decent deepfakes earlier, free consumer apps now suffice. Manipulating footage to alter context no longer needs advanced techniques, as apps like Impressions Kit allow spoofing humour dolls to mimic anyone’s body language.

Experts describe the field as having moved from ‘benchtop to desktop’ in ease of access. Machine learning continues to enhance visual, vocal and behavioural realism in step with the data it is trained on. For most viewers, synthesised content could become indistinguishable from reality before the end of this decade. Only better forensic authentication standards can catch up in this classic hare and tortoise chase.

Why is Nepal at risk?

Despite low digital literacy, Nepal has seen rapid growth in internet access, fuelled by mobile penetration and a social media frenzy. About 65 per cent of the population has internet access today, up from a mere 15 per cent in 2010.

On average, a Nepali spends close to seven hours per day on the mobile internet as per Nepal Telecom data. But digital literacy and the ability to authenticate sources before sharing sensational clips rarely keep up with this rapid technological transformation, especially for a demographic where Facebook and TikTok constitute an ‘entire internet’ experience.

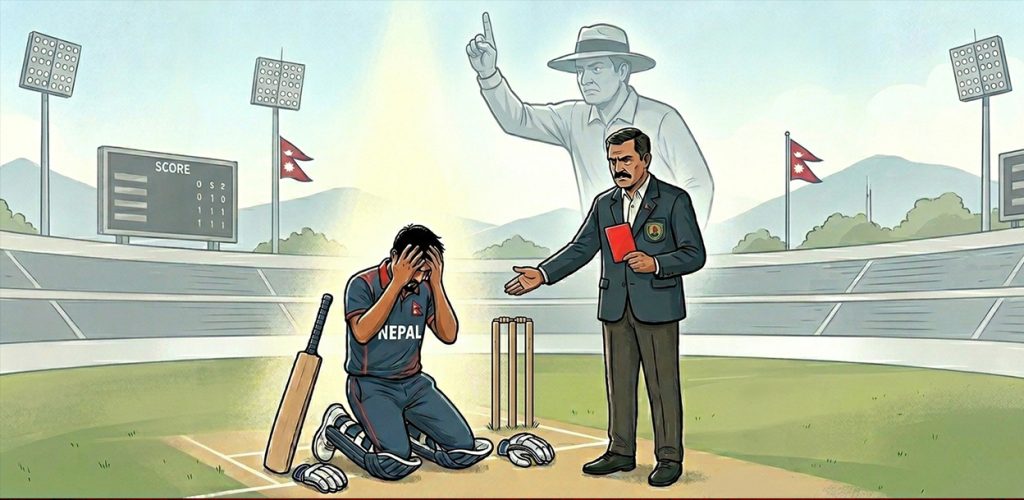

Our vulnerability is particularly high around elections, which see heightened misinformation every cycle. Meanwhile, deepfake apps now allow users to generate fairly realistic footage without advanced knowledge. Soon anyone with a smartphone could ‘hack reality’ to malign political candidates before elections.

Even if a fake is eventually debunked, first impressions stick strongest. With experts confirming that there is currently no reliable way to fully authenticate videos, the risk of manipulated content triggering unrest remains real, whether around elections, racial tensions or other conflicts, all amplified manifold through social media echo chambers. Believable fakes risk eroding the last vestiges of truth and trust.

While over 30 countries have introduced anti-deepfake legislation since 2019, Nepal’s policy discourse largely ignores this gathering storm. Beyond research showing that deepfakes can potentially influence electoral outcomes, they raise dire privacy concerns when a person’s likeness is used without consent, including personal attacks, revenge porn and identity theft against unsuspecting individuals.

Technologists warn that many malicious uses emerge before regulations can catch up. The deluge of misinformation around Covid-19 offered a glimpse of just how fast conspiracy theories take root in Nepal’s rumour mills.

Now imagine believable but edited footage depicting leaders, celebrities or ordinary persons going viral across Facebook groups, TikTok or any reel-sharing platform before any fact checker investigates. Our overburdened and under-resourced media ecosystem lacks the capacity for sophisticated verification to contain potential damage.

Solutions will involve individual and collective actions along with policy interventions. Enhancing digital literacy and running public awareness campaigns form a first step, so that internet users do not unwittingly share manipulated footage without basic verification.

Just as anti-piracy alerts precede movies, all media should caution against deepfake possibilities. The government, civil society and tech companies must collaborate urgently against this challenge. While expanded fact-checking capacities help, dedicated cyber security task forces are vital to identify and curb misinformation outbreaks before they go viral.

Legally, anti-deepfake penalties introduced by countries like China, South Korea, the USA and the UK offer models that can be adapted to Nepal’s context to deter malicious uses while enabling innovation. Defining deepfakes to cover manipulated audio and video, requiring explicit consent before generating any personal imagery, penalising non-consensual use and mandating removal upon complaint offer a good starting point for discourse around regulations.

Safeguarding our fragile democracy

Deepfakes threaten to contaminate the info-environment by eroding the vestiges of truth. Unchecked, believable forgeries risk hijacking policy narratives around divisive issues to serve partisan agendas with severe societal consequences. The health of our democracy hinges upon shared truths and trust in information channels.

With both facing global crises today, Nepal needs urgent collective action to secure our digital future against technological threats that manipulate reality. As institutions deliberate guardrails against this ‘post-truth’ horizon, public vigilance against viral trickery forms the first line of defence. Malicious deepfakes bank on the tendency to share sensational content without verification.

Just as Covid made mask-wearing a vital habit, pausing to authenticate the media one shares could well emerge as the hallmark of a vigilant digital citizen. Our actions online feed the spread of misinformation targeting democratic processes, be it elections or policy discourse. This calls for a renewed commitment to healthy information ecosystems, promoting digital literacy alongside literacy.

The next waves of technological threats bank on eroding public consensus around foundational facts and truths central to shared reality. Securing our democracy begins with securing perceptions against attempts at hacking reality. Both state and citizens need to collaborate urgently against this gathering storm of persuasive fakes usurping video as undisputed evidence.